From Context to Cognition: What QCon London 2026 Taught Us About Building Real AI

Last week our CTO Carlos Blanco attended QCon London and came away impressed by the depth of knowledge and experience shared by speakers and fellow attendees alike. As Cirquel’s CTO, he was particularly interested in how teams are actually shipping AI into production, and he returned with plenty to share with the team.

Here is a quick recap of the insights that resonated most and why.

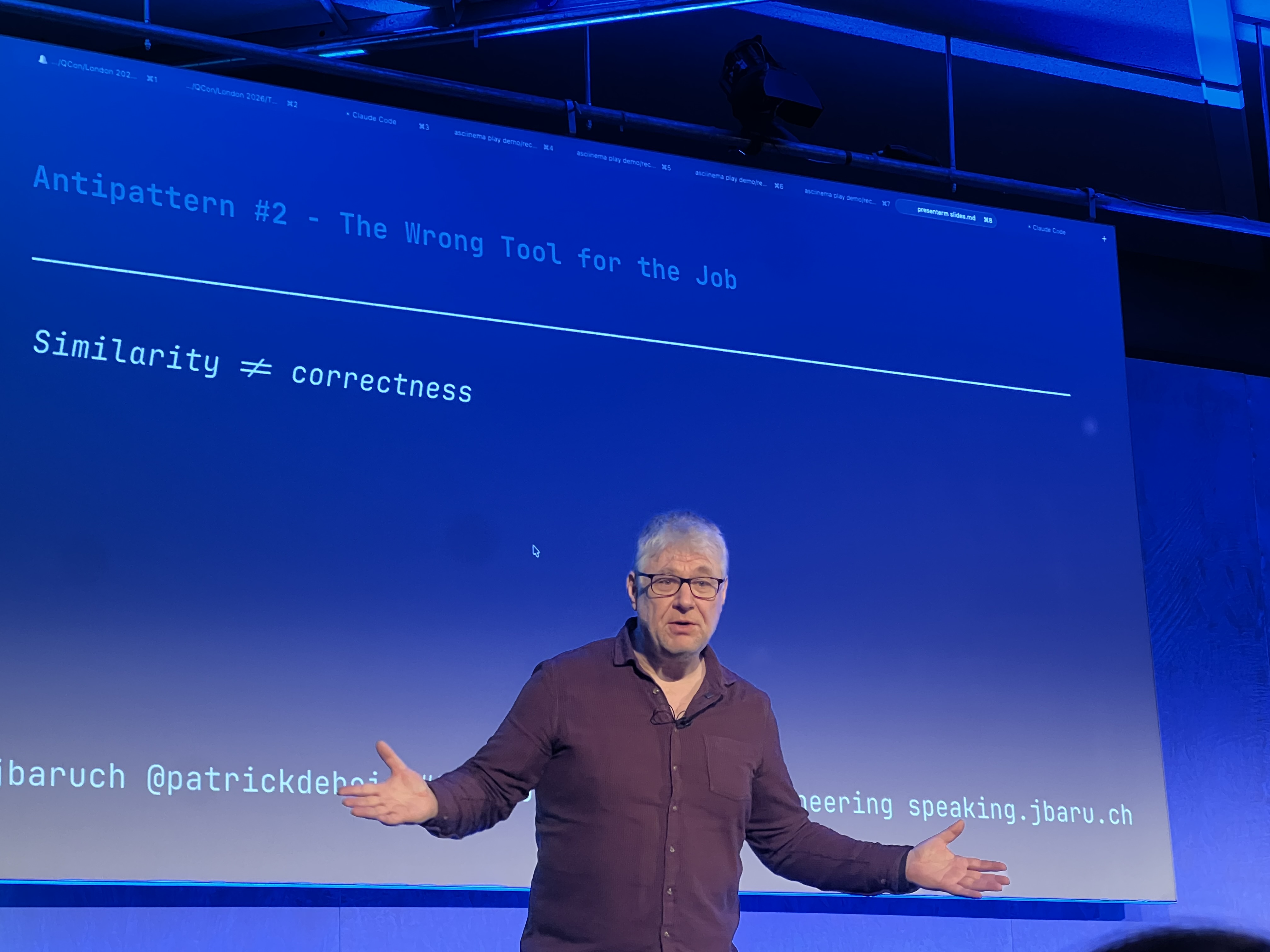

Patrick Debois on stage for “The Right 300 Tokens Beat 100k Noisy Ones: The Architecture of Context Engineering”

Context is an engineering problem

One of the sessions Carlos enjoyed most argued that bigger context windows do not make agents smarter, and more documentation does not mean better answers. What actually degrades performance is loading everything into context at once and hoping the model works out what is relevant.

The alternative is what some people are calling context engineering: building small, modular units of context that are loaded only when they are actually needed. Each unit's description is what the agent uses to decide whether to load it at all, so getting the description right matters more than the content itself. The results shared backed this up: 35% accuracy with no structured context versus 98% with properly organised skills, rules and documentation.
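To make the idea concrete, here is a minimal Python sketch of description-driven loading. It is our own illustration, not Tessl's implementation: the `ContextUnit` class, the stop-word list and the keyword-overlap rule in `select_units` are hypothetical stand-ins. In a real agent the model itself would read each description and decide what to load.

```python
from dataclasses import dataclass

# Hypothetical stop-word list so common words do not count as relevance signals.
STOPWORDS = {"a", "an", "the", "and", "or", "of", "to", "for", "in", "on", "with", "this", "is"}


@dataclass
class ContextUnit:
    name: str
    description: str  # what the agent reads when deciding whether to load the unit
    content: str      # only added to the prompt if the unit is selected


def words(text):
    """Lower-cased word set with punctuation and stop words removed."""
    return {w.strip(".,:;!?").lower() for w in text.split()} - {""} - STOPWORDS


def select_units(task, units, min_overlap=2):
    """Select units whose *description* shares at least `min_overlap` words with the task.

    Keyword overlap is a placeholder: in practice the model would judge
    relevance from the descriptions.
    """
    task_words = words(task)
    return [u for u in units if len(task_words & words(u.description)) >= min_overlap]


def build_context(task, units, budget_words=300):
    """Join the contents of selected units, skipping any that would exceed the budget.

    The budget counts whitespace-separated words, a rough proxy for tokens.
    """
    parts, used = [], 0
    for unit in select_units(task, units):
        size = len(unit.content.split())
        if used + size > budget_words:
            continue
        parts.append(unit.content)
        used += size
    return "\n\n".join(parts)


units = [
    ContextUnit("returns-grading", "Rules for grading a returned garment condition",
                "Grade A: unworn, tags attached."),
    ContextUnit("billing", "Invoice and billing formats for enterprise customers",
                "Invoices are issued monthly."),
]
print(build_context("Grade the condition of this returned garment", units))
# prints: Grade A: unworn, tags attached.
```

Only the unit whose description overlaps with the task is loaded, so the billing content never reaches the prompt. Swapping the keyword check for a model call would change how units are selected but not the overall shape: descriptions are always read, content only on demand.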

Domain depth is the moat

Another talk he really enjoyed made a simple argument: the most defensible advantage in AI right now is not your model or your team size but how deeply you understand your industry. Frontier models are pushing aggressively into applied verticals, and startups without the domain knowledge to direct the technology will find it very hard to compete.

What stood out most was the scale one team is operating at: 22 people supporting hundreds of billions in assets under management and building products that would traditionally require hundreds of people over multiple years. Results will vary, of course, but the direction of travel is clear.

Retrieval needs proper engineering

One of the most practically useful sessions covered building a research assistant for searching thousands of regulatory documents and walked through everything that went wrong along the way. Two key takeaways:

- Document parsing: Switching from a standard OCR pipeline to an agentic document-understanding approach, which combines visual reasoning with traditional OCR, improved retrieval accuracy by 16 percentage points on its own. It is a foundational step that is easy to underestimate.

- Recency signals: Semantic similarity retrieves documents that look like the query, but not necessarily ones that are current. Encoding recency as a temporal vector and combining it with the semantic embedding at query time lets you account for both at once.
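The session did not go into implementation detail, so the Python sketch below shows just one way to fold recency into a vector score, under our own assumptions. Document age is mapped to an angle on a quarter circle (the hypothetical `HORIZON_DAYS` sets how old counts as fully stale), and the semantic and temporal parts are concatenated with weights chosen so the dot product works out to `(1 - w) * semantic_similarity + w * recency`. The default `recency_weight` is illustrative, not a value from the talk.

```python
import math

# Hypothetical horizon: anything this old (or older) gets the minimum recency score.
HORIZON_DAYS = 3650.0


def normalise(v):
    """Scale a non-zero vector to unit length."""
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]


def temporal_vector(age_days):
    """Map age onto a quarter circle: 0 days -> angle 0, HORIZON_DAYS -> pi/2."""
    clipped = min(max(age_days, 0.0), HORIZON_DAYS)
    theta = (math.pi / 2) * clipped / HORIZON_DAYS
    return [math.cos(theta), math.sin(theta)]


def combined_vector(embedding, age_days, recency_weight):
    """Concatenate scaled semantic and temporal parts so dot products become weighted sums."""
    a = math.sqrt(1.0 - recency_weight)
    b = math.sqrt(recency_weight)
    return [a * x for x in normalise(embedding)] + [b * x for x in temporal_vector(age_days)]


def rank(query_embedding, docs, recency_weight=0.3):
    """Rank (doc_id, embedding, age_days) tuples; the query is stamped as 'now' (age 0)."""
    q = combined_vector(query_embedding, 0.0, recency_weight)
    scored = []
    for doc_id, embedding, age_days in docs:
        d = combined_vector(embedding, age_days, recency_weight)
        scored.append((doc_id, sum(x * y for x, y in zip(q, d))))
    return sorted(scored, key=lambda item: item[1], reverse=True)


docs = [
    ("old_rule", [1.0, 0.0], 2000),
    ("new_rule", [1.0, 0.0], 30),
    ("unrelated", [0.0, 1.0], 0),
]
print([doc_id for doc_id, _ in rank([1.0, 0.0], docs)])
# prints: ['new_rule', 'old_rule', 'unrelated']
```

With two semantically identical documents, the newer one ranks first, while a brand-new but unrelated document still earns only the recency share of the score (0.3 here). Embeddings must be non-zero vectors, since `normalise` does not guard against a zero norm.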

The broader advice was to evaluate everything against real user queries, start small and monitor from day one. It is underrated advice, and a lot of teams skip it early on.
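One lightweight way to act on that advice is a recall@k check over logged user queries, each labelled with the documents that actually answered it. The Python sketch below is our own illustration rather than anything shown in the talk; `search` is assumed to be any function mapping a query string to a ranked list of document IDs, and the choice of metric is an assumption.

```python
def recall_at_k(retrieved, relevant, k):
    """Fraction of the relevant document IDs that appear in the top-k results."""
    if not relevant:
        raise ValueError("each labelled query needs at least one relevant document")
    hits = len(set(retrieved[:k]) & set(relevant))
    return hits / len(relevant)


def evaluate(search, labelled_queries, k=5):
    """Mean recall@k over a non-empty list of (query, relevant_ids) pairs from real traffic."""
    scores = [recall_at_k(search(query), relevant, k) for query, relevant in labelled_queries]
    return sum(scores) / len(scores)


# Hypothetical stand-in for a retrieval system: query -> ranked document IDs.
fake_index = {
    "capital requirements 2025": ["doc-7", "doc-1", "doc-3"],
    "liquidity reporting deadline": ["doc-4", "doc-2", "doc-8"],
}
labelled = [
    ("capital requirements 2025", {"doc-7"}),
    ("liquidity reporting deadline", {"doc-2", "doc-9"}),
]
print(evaluate(lambda q: fake_index.get(q, []), labelled, k=3))  # prints: 0.75
```

Re-running the same labelled set after every change to parsing, chunking or ranking, and tracking the number over time, is one simple way to start monitoring from day one.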

Agents need memory

The final insight came from a talk about building cognitive memory into a large-scale AI agent, and it addressed something we think about a lot at Cirquel. Language models are stateless: every session starts from scratch, even though users expect the system to know them and adapt over time.

The approach shared was to model long-term memory as a tree rather than a knowledge graph, because trees are cheaper to maintain and much more efficient to update incrementally. After a year in production, the results were impressive: 81% fewer profiles reviewed to find a match, a 66% higher acceptance rate on AI-drafted outreach emails, and 1.5 hours saved per user per task cycle.
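The talk did not publish code, so here is a deliberately small Python sketch of why a tree suits incremental updates, under our own assumptions: each memory is filed under a path of topic labels, writing a new fact touches only the nodes on its root-to-leaf path, and recall gathers everything in a subtree. `MemoryTree`, `remember` and `recall` are hypothetical names, not LinkedIn's design.

```python
from dataclasses import dataclass, field


@dataclass
class MemoryNode:
    label: str
    facts: list = field(default_factory=list)
    children: dict = field(default_factory=dict)
    writes: int = 0  # number of writes that passed through this node


class MemoryTree:
    """Hypothetical per-user memory where a tuple of labels addresses a node."""

    def __init__(self):
        self.root = MemoryNode("root")

    def remember(self, path, fact):
        """Incremental update: only the nodes on the root-to-leaf path are touched."""
        node = self.root
        node.writes += 1
        for label in path:
            node = node.children.setdefault(label, MemoryNode(label))
            node.writes += 1
        node.facts.append(fact)

    def recall(self, path):
        """Return every fact stored at or below the node addressed by `path`."""
        node = self.root
        for label in path:
            if label not in node.children:
                return []
            node = node.children[label]
        found, stack = [], [node]
        while stack:
            current = stack.pop()
            found.extend(current.facts)
            stack.extend(current.children.values())
        return found


memory = MemoryTree()
memory.remember(("preferences", "roles"), "Interested in backend engineering roles")
memory.remember(("preferences", "location"), "Open to London or remote")
memory.remember(("history", "outreach"), "Prefers short outreach emails")
print(memory.recall(("history",)))  # prints: ['Prefers short outreach emails']
```

Adding a fact never rewrites unrelated branches, which is the property that keeps incremental updates cheap. By contrast, adding a fact to a graph can mean creating or revising edges to many existing entities.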

What we are taking back

The consistent theme was that shipping AI that actually works in production is an engineering discipline, not just a model choice, and that the teams doing the most interesting work are the ones with deep domain knowledge.

All of this maps pretty directly onto what we are building at Cirquel. How we structure context when assessing a returned fashion item matters more than which model we choose. Our advantage is understanding how a returned garment moves through a supply chain in ways a general-purpose model cannot easily replicate. And every time our AI processes a return, it generates a signal that, over time, should make the system genuinely smarter. Context, retrieval and memory are the three things we are most focused on getting right, and it was really useful to see how other teams are approaching the same problems.

A big thank you to the QCon team and all the speakers for three genuinely useful days. We are heading into our pre-seed fundraising round in April 2026, so if you are a founder, investor or engineer interested in where AI meets sustainability, we would love to connect at cirquel.co.

Talks referenced

- The Right 300 Tokens Beat 100k Noisy Ones: The Architecture of Context Engineering — Patrick Debois and Baruch Sadogursky, Tessl

- The Realities of Building an AI Native Fintech Startup — David Lin, Linvest21

- Reliable Retrieval for Production AI Systems — Lan Chu, Rabobank

- Beyond Context Windows: Building Cognitive Memory for AI Agents — Karthik Ramgopal, LinkedIn

Carlos Blanco is CTO and co-founder of Cirquel. Follow along on LinkedIn.